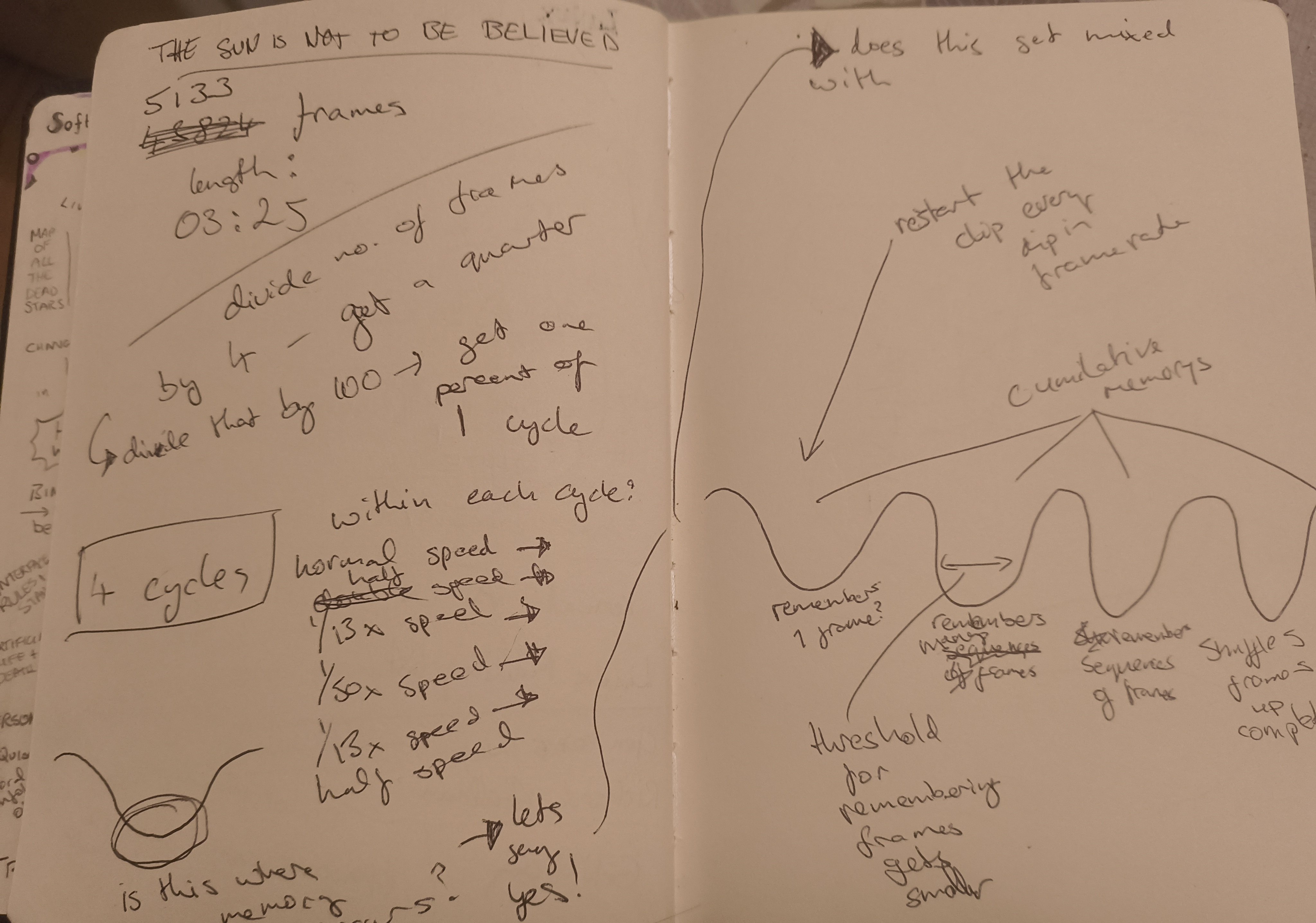

Not a clean timeline. More like: causes → side-effects → accidents.

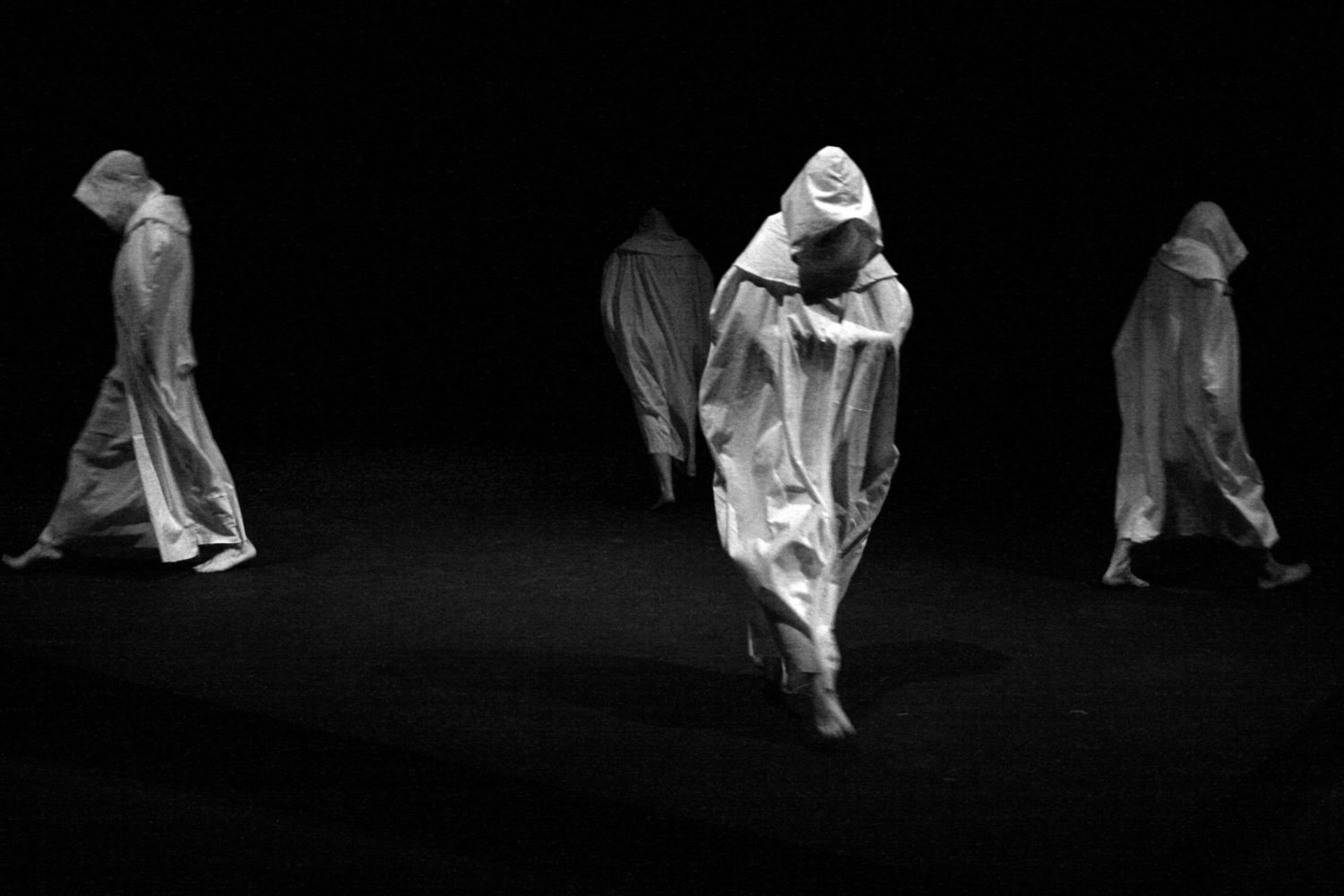

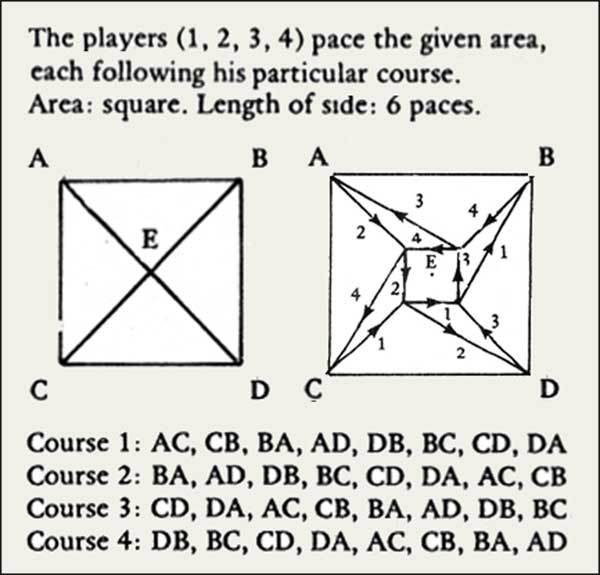

Creative Pathways | Beckett-esque Quad as a structural ghost: repetition, rule-based movement, returning-without-resolution. Constraints, cycles, overlap.

Repetition allows the system to reveal itself.

Filmic structures of duration and movement. Modulations in frame rate let time behave like a plastic material — bending the movement already inside the footage.

// experiments with different algorithms

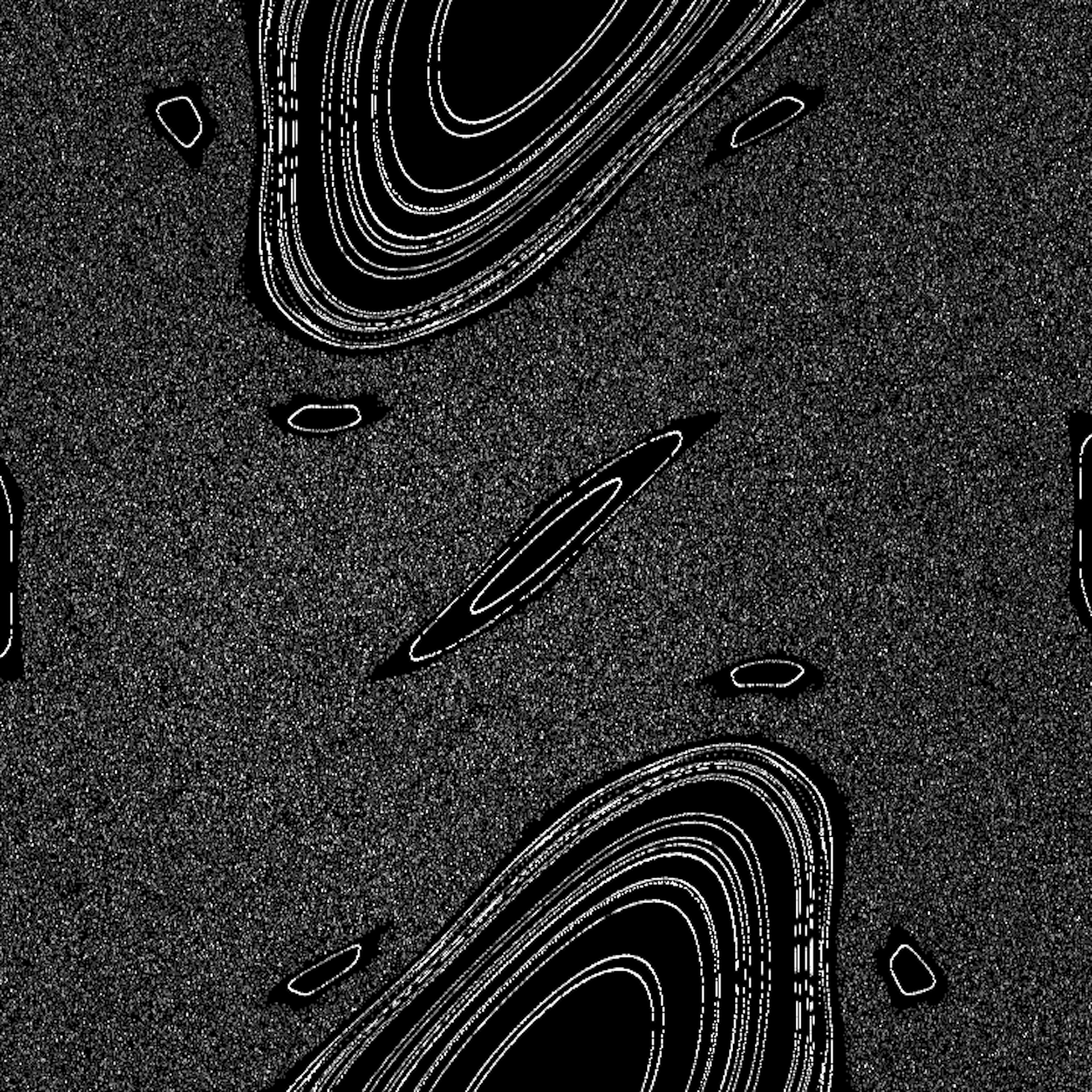

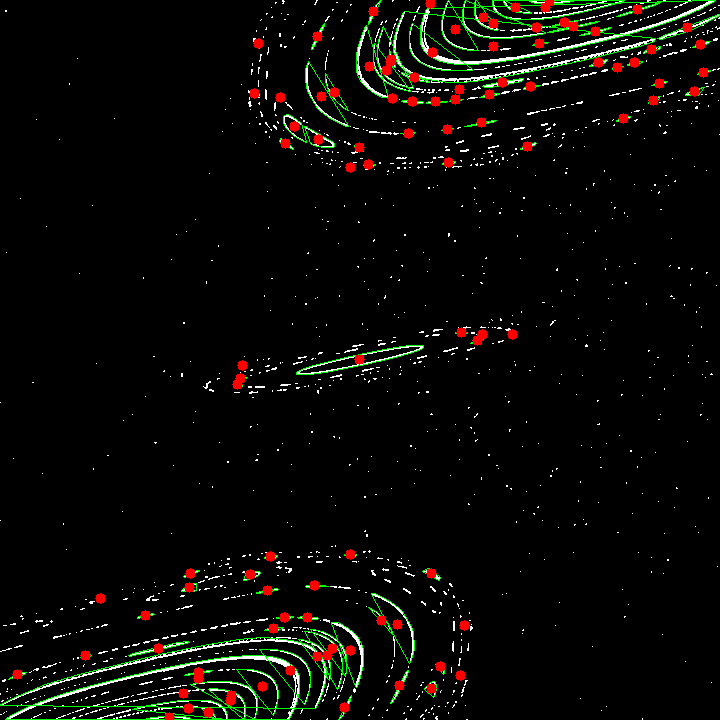

// CHAOS — logistic maps / Hénon map / Lorenz attractor

// L-systems — branching structures

// syncing audio by modulating waveforms in Max/MSP

Frankfurt shoot: harsh sun, high contrast, the garden as a score. The cinematography gives the algorithm something to push against: shadow edges, texture, glare, bodies entering/exiting exposure.

(If this doesn’t play: try .mp3 export, or confirm the path is /audio/angryman.wav)

TD patch

brainmap output → CV detection of centroids → weighted frame pool

Chaos came in as a way to avoid generic randomness used as a stylistic gesture, and to keep it closer to structural behaviour. A simple attractor becomes a field of bias. It doesn’t decide what to remember; it shapes how the remembering happens.
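A minimal sketch of that idea, not the patch itself: a logistic map is iterated, and each value of its orbit adds weight to one frame, so the shape of the attractor becomes a bias over the frame pool. The names `logistic_orbit` and `bias_field`, and the values `r=3.9` and `x0=0.2`, are illustrative assumptions.

```python
def logistic_orbit(r=3.9, x0=0.2, steps=1000, burn_in=100):
    """Iterate x -> r * x * (1 - x) and return the orbit after a burn-in."""
    x = x0
    orbit = []
    for i in range(burn_in + steps):
        x = r * x * (1.0 - x)
        if i >= burn_in:
            orbit.append(x)
    return orbit


def bias_field(orbit, frame_count):
    """Turn an orbit in (0, 1) into per-frame weights (hypothetical mapping)."""
    weights = [1.0] * frame_count  # every frame starts with a baseline weight
    for x in orbit:
        index = min(int(x * frame_count), frame_count - 1)
        weights[index] += 1.0  # frames the orbit visits often get heavier
    return weights


weights = bias_field(logistic_orbit(), frame_count=200)
```

The orbit never chooses a frame directly. It only tilts the weights that a later random pick would draw from.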

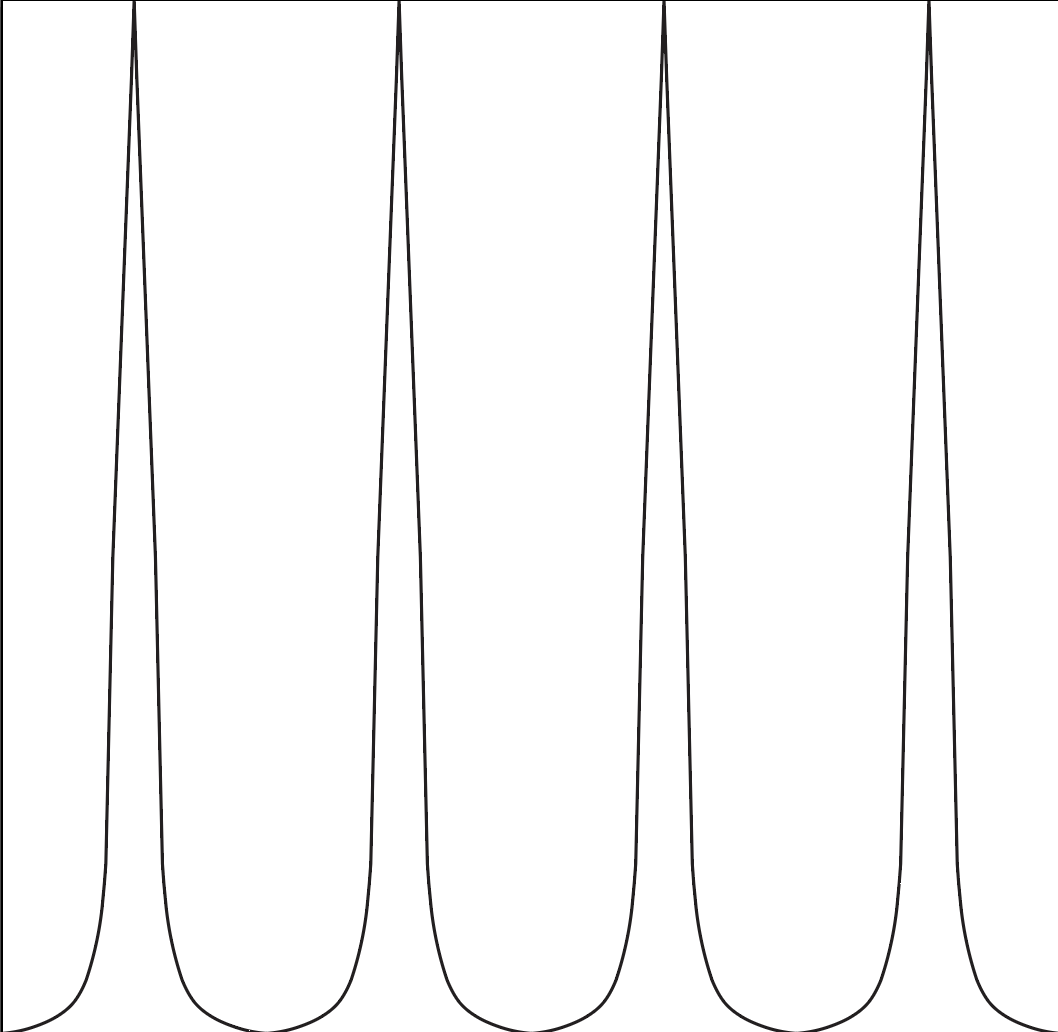

Using SVGs to shape the framerate algorithm felt like drawing a score: a small mediation from the code, closer to making than “optimising”.
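The helpers `parse_svg` and `create_speed_function` are not shown in these notes. One possible shape for them, as a sketch under loud assumptions: the curve is drawn as a single `<polyline>` element, x is read as cycle progress, y as speed, and the points are joined by linear interpolation. The actual SVG layout and interpolation used in the piece may differ.

```python
import xml.etree.ElementTree as ET

import numpy as np


def parse_svg(svg_file):
    """Read control points from the first <polyline> (assumed SVG layout)."""
    root = ET.parse(svg_file).getroot()
    # Match on the tag suffix so this works with or without the SVG namespace.
    polyline = next(el for el in root.iter() if el.tag.endswith('polyline'))
    values = [float(v) for v in polyline.get('points').replace(',', ' ').split()]
    points = np.array(values).reshape(-1, 2)
    return points[np.argsort(points[:, 0])]  # sort by x for interpolation


def create_speed_function(control_points):
    """Return f(progress in 0..1) -> normalised speed in 0..1."""
    xs, ys = control_points[:, 0], control_points[:, 1]
    # Assumes the drawing is not a single point or a flat line.
    x_norm = (xs - xs.min()) / (xs.max() - xs.min())
    # SVG y grows downward, so flip it: the top of the drawing is the fastest.
    y_norm = (ys.max() - ys) / (ys.max() - ys.min())

    def speed_function(cycle_progress):
        return float(np.interp(cycle_progress, x_norm, y_norm))

    return speed_function
```

Drawing the curve in a vector editor and re-exporting the file then changes the timing without touching the code.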

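```python
# Module-level speed curve; set by load_speed_function_from_svg().
speed_function = None


def load_speed_function_from_svg(svg_file):
    global speed_function
    # parse_svg and create_speed_function are helpers not shown in these notes.
    control_points = parse_svg(svg_file)
    speed_function = create_speed_function(control_points)


def materialBreakdown(cycle_progress, min_speed=0.025, max_speed=1.0):
    normalised_speed = speed_function(cycle_progress)
    # Rescale the normalised value into the [min_speed, max_speed] range.
    return normalised_speed * (max_speed - min_speed) + min_speed
```

Moving into TouchDesigner opened the piece: code scripting as choreography, layers as performers, feedback as memory.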

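```python
import random

import cv2
import numpy as np

# Shared state; the patch is assumed to set frameCount and
# strong_memory_frames before the functions below run.
frameCount = 0
memory_frames = {}
strong_memory_frames = []


def computeCentroids(frameCount):
    image = cv2.imread('brainmap.png', cv2.IMREAD_GRAYSCALE)
    height, width = image.shape
    # Assumption: a fixed mid-grey threshold; the original cutoff is not shown.
    _, binary = cv2.threshold(image, 127, 255, cv2.THRESH_BINARY)
    contours, _ = cv2.findContours(binary, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)

    centroids = []
    for contour in contours:
        M = cv2.moments(contour)
        if M['m00'] != 0:  # skip degenerate contours with zero area
            cx = int(M['m10'] / M['m00'])
            cy = int(M['m01'] / M['m00'])
            centroids.append((cx, cy))

    # Map each centroid position to a frame index in [0, frameCount).
    all_memory_frames = sorted(set(
        int((abs(cx) + abs(cy)) / (2 * max(width, height)) * frameCount)
        for cx, cy in centroids
    ))
    return all_memory_frames


def initialise_memory_frames(strong_memory_frames):
    for frame in range(int(frameCount)):
        memory_frames[frame] = {'weight': 1}
    for frame in strong_memory_frames:
        memory_frames[frame]['weight'] += 10


def select_frame_near_attractors():
    frames = list(memory_frames.keys())
    weights = np.array([memory_frames[f]['weight'] for f in frames])
    cumulative = np.cumsum(weights) / np.sum(weights)
    r = random.random()
    # Clamp the index: rounding can leave cumulative[-1] slightly below 1.0.
    frame = frames[min(np.searchsorted(cumulative, r), len(frames) - 1)]
    memory_frames[frame]['weight'] *= 1.5  # recalling a frame strengthens it
    return frame


def reinforce_memory():
    for frame in memory_frames:
        if frame in strong_memory_frames:
            memory_frames[frame]['weight'] *= 2
        else:
            memory_frames[frame]['weight'] *= 0.95  # slow forgetting
```

Memory ≠ archive. Memory is a process: weights shift, reinforcement happens, forgetting happens. “Remembering” is not retrieval; it’s re-composition under constraints.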
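To watch that process over time, here is a small self-contained simulation. It is a sketch, not the TD patch: it keeps the same factors (a +10 head start and ×2 per cycle for strong frames, ×1.5 on recall, ×0.95 decay for everything else) but swaps the cumulative-sum lookup for Python's `random.choices`. The function name `simulate_memory` and its parameters are illustrative assumptions.

```python
import random


def simulate_memory(frame_count=100, strong=(10, 50), cycles=20,
                    picks_per_cycle=5, seed=0):
    """Run recall and reinforcement cycles; return final weights and picks."""
    rng = random.Random(seed)
    strong = set(strong)
    weights = [1.0] * frame_count
    for frame in strong:
        weights[frame] += 10.0  # strong memories start heavier

    history = []
    for _ in range(cycles):
        # Recall: weighted random picks from the whole frame pool.
        picked = rng.choices(range(frame_count), weights=weights, k=picks_per_cycle)
        for frame in picked:
            weights[frame] *= 1.5  # being recalled strengthens a frame
        # Reinforcement and forgetting applied to every frame.
        for frame in range(frame_count):
            weights[frame] *= 2.0 if frame in strong else 0.95
        history.extend(picked)
    return weights, history


weights, history = simulate_memory()
```

With these factors the strong frames tend to crowd out everything else within a few cycles, which is one way of testing whether the system should get more complex or simpler.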

the past becomes real in the act of recall

What is a “strong memory”?

Should the memory system get more complex (or simpler)?

How do you show the system without explaining it to death?

Should I show the code / the patch / the textport or just the behaviour?